Sources:

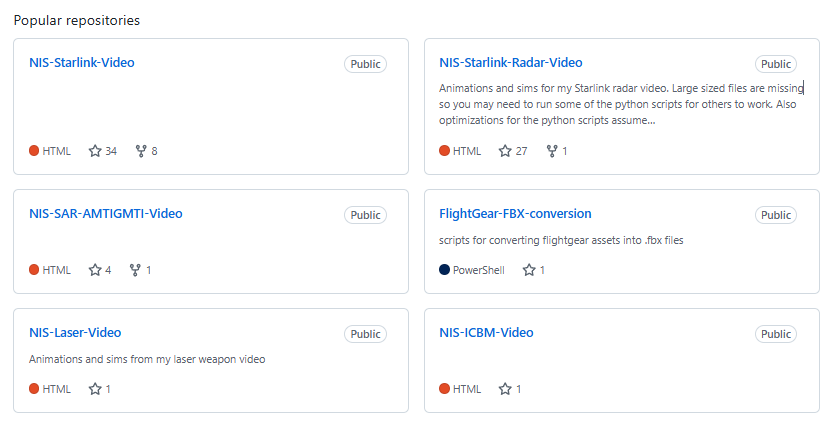

noiseinspacechannel · GitHub https://github.com/noiseinspacechannel

https://notebooklm.google.com/notebook/eef981b1-fa90-4e4b-b3c0-621ad94e213f

Prompt: Hey GPT, what's cool here for our SCYTHE System?

Response: "Yes, the most interesting parts are in NIS-Starlink-Radar-Video-main and NIS-SAR-AMTIGMTI-Video-main. The rest looks mostly like visualization/demo HTML.

Best candidates for SCYTHE consideration:

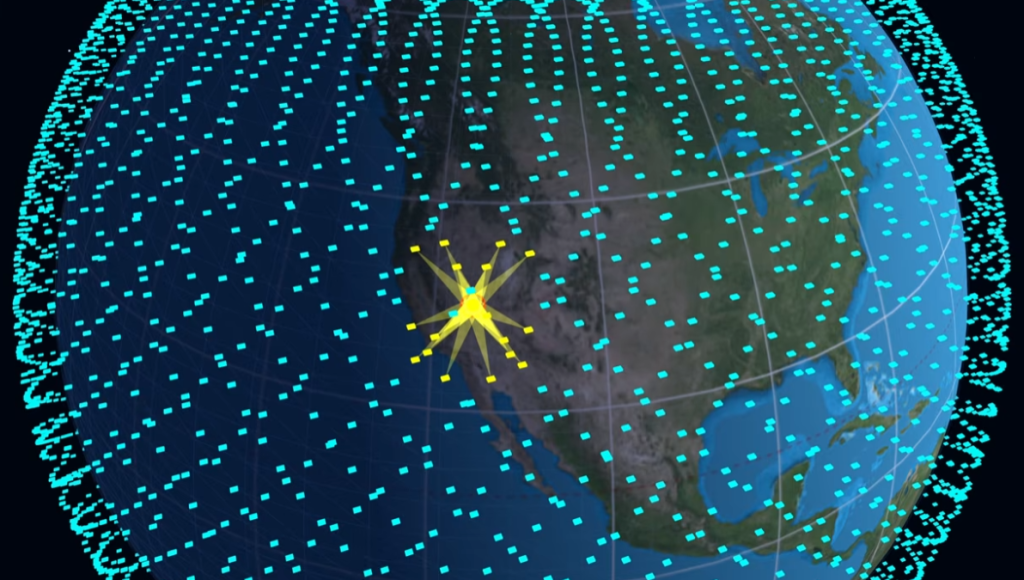

1. NIS-Starlink-Radar-Video-main/Python Scripts/sigint_sim_env.py

This is the strongest find. It’s doing GPU-backed multibeam SIGINT scene generation with explicit emitter placement across LTE/Wi‑Fi bands, temporal PRN-like streams, satellite motion, and swept beam collection. That maps well to:

- synthetic RF observation generation

- protocol/band priors for your MacCluster / RF signature work

- training/evaluation data for RFUAV and control-path evidence
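To make the "emitter placement plus swept beam collection" idea concrete, here is a minimal CPU-only sketch in NumPy. It is not taken from sigint_sim_env.py: the band table, the function names (`make_emitters`, `collect`), the 1-D ground track, the Gaussian beam shape, and the 550 km altitude are all assumptions chosen for illustration. Path loss uses the standard Friis free-space term.

```python
import numpy as np

C_M_S = 299_792_458.0  # speed of light

# Hypothetical band table (centre frequencies in MHz); not read from the repository.
BANDS = {"lte_b3_dl": 1842.5, "wifi_2g4_ch6": 2437.0}


def make_emitters(n, extent_km=50.0, seed=0):
    """Place n emitters along a 1-D ground track with a random band and power."""
    rng = np.random.default_rng(seed)
    x_km = rng.uniform(-extent_km, extent_km, size=n)
    band = rng.choice(list(BANDS), size=n)
    power_w = rng.uniform(0.1, 1.0, size=n)
    return x_km, band, power_w


def collect(x_km, band, power_w, sat_track_km, steer_deg,
            alt_km=550.0, beamwidth_deg=0.5):
    """Sweep a Gaussian beam over steer_deg at each satellite position.

    Returns {band_name: array of shape (n_positions, n_beams)} holding the
    summed received power per beam (isotropic emitters, free-space loss).
    """
    sigma = beamwidth_deg / 2.355  # FWHM -> Gaussian sigma
    freq_hz = np.array([BANDS[b] for b in band]) * 1e6
    wavelength_m = C_M_S / freq_hz
    cube = {name: np.zeros((len(sat_track_km), len(steer_deg))) for name in BANDS}
    for t, sat_x in enumerate(sat_track_km):
        dx_km = x_km - sat_x
        # Off-nadir angle and slant range from the satellite to each emitter.
        angle_deg = np.degrees(np.arctan2(dx_km, alt_km))
        range_m = np.hypot(dx_km, alt_km) * 1e3
        path_gain = (wavelength_m / (4.0 * np.pi * range_m)) ** 2  # Friis term
        beam_gain = np.exp(-0.5 * ((steer_deg[:, None] - angle_deg[None, :]) / sigma) ** 2)
        rx = beam_gain * (power_w * path_gain)[None, :]
        for name in BANDS:
            cube[name][t] = rx[:, band == name].sum(axis=1)
    return cube
```

A call such as `collect(*make_emitters(20), np.linspace(-30, 30, 7), np.linspace(-5, 5, 41))` yields one position-by-beam power map per band, which is the kind of array a downstream classifier or localization step could consume.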

2. NIS-Starlink-Radar-Video-main/Python Scripts/sigint_multibeam_processing.py

This has a real post-processing pipeline: band sweeps, CLEAN-like deconvolution, tomographic source mapping, and cached viewer output. That could be useful for:

- spectrum/source localization experiments

- operator-facing RF heat/intensity products

- generating higher-level features from synthetic or captured RF scenes
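For readers unfamiliar with CLEAN, the following is a minimal 1-D Högbom-style sketch in NumPy. It shows the general technique only and makes no claim about how sigint_multibeam_processing.py implements its "CLEAN-like" step. The function name `hogbom_clean_1d` and the parameters `gain`, `threshold`, and `max_iter` are assumptions, and the point-spread function is assumed to have odd length with its peak at the centre.

```python
import numpy as np


def hogbom_clean_1d(dirty, psf, gain=0.1, threshold=1e-3, max_iter=500):
    """Iteratively remove scaled copies of the PSF from the brightest residual peak.

    Returns (model, residual): model holds the recovered point-source
    amplitudes, residual is what remains of the dirty signal.
    """
    residual = np.asarray(dirty, dtype=float).copy()
    psf = np.asarray(psf, dtype=float)
    psf = psf / psf.max()          # normalise so the PSF peak is 1
    half = len(psf) // 2           # PSF centre index (odd length assumed)
    n = len(residual)
    model = np.zeros(n)
    for _ in range(max_iter):
        peak = int(np.argmax(np.abs(residual)))
        value = residual[peak]
        if abs(value) < threshold:
            break
        model[peak] += gain * value
        # Subtract the PSF centred on the peak, clipped to the array bounds.
        lo = max(0, peak - half)
        hi = min(n, peak + half + 1)
        residual[lo:hi] -= gain * value * psf[lo - peak + half:hi - peak + half]
    return model, residual
```

Running it on `np.convolve(truth, psf, mode="same")`, where `truth` contains a few isolated spikes, should return a `model` concentrated at the spike positions. A small loop gain trades iterations for stability when sources overlap.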

3. NIS-SAR-AMTIGMTI-Video-main/sar_scene_data.py

This is interesting as a scene/material/terrain generator, not just a toy. It pulls elevation, defines terrain/material properties, and includes object/model placement. Good fit for:

- synthetic geospatial scene generation

- radar/SAR-informed environment priors

- richer simulation backdrops for SCYTHE’s predictive or visualization stack
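As a rough illustration of what "elevation plus terrain/material properties" can look like in code, the sketch below builds a synthetic elevation grid and labels each cell with a material class from elevation and slope. Nothing here comes from sar_scene_data.py: the function names, the thresholds, and the material table (including its relative backscatter numbers) are placeholder assumptions, and the sinusoid terrain stands in for real elevation data.

```python
import numpy as np

# Placeholder material table: id -> (name, relative backscatter).
# The values are illustrative assumptions, not measured or repository data.
MATERIALS = {0: ("water", 0.05), 1: ("soil", 0.3), 2: ("rock", 0.6)}


def synth_elevation(n=64, seed=0):
    """Smooth pseudo-terrain built from a few random low-frequency sinusoids."""
    rng = np.random.default_rng(seed)
    y, x = np.mgrid[0:n, 0:n] / n
    z = np.zeros((n, n))
    for _ in range(4):
        fx, fy = rng.integers(1, 4, size=2)
        phase = rng.uniform(0.0, 2.0 * np.pi)
        z += rng.uniform(20.0, 100.0) * np.sin(2.0 * np.pi * (fx * x + fy * y) + phase)
    return z


def classify(z, cell_m=30.0, sea_level=0.0, steep_deg=20.0):
    """Label each cell: water at or below sea level, rock on steep slopes, else soil."""
    gy, gx = np.gradient(z, cell_m)                 # elevation change per metre
    slope_deg = np.degrees(np.arctan(np.hypot(gx, gy)))
    material = np.ones(z.shape, dtype=int)          # default: soil
    material[slope_deg > steep_deg] = 2             # steep cells -> rock
    material[z <= sea_level] = 0                    # low cells -> water (wins over rock)
    return material, slope_deg


def backscatter_map(material):
    """Look up the placeholder backscatter value for every cell."""
    table = np.array([MATERIALS[i][1] for i in sorted(MATERIALS)])
    return table[material]
```

The resulting `material` and backscatter grids are the sort of per-cell priors a radar or visualization layer could read alongside the elevation array.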

4. NIS-SAR-AMTIGMTI-Video-main/sar_simulator_ursina.py

Less exciting as production code, but useful as a 3D geospatial sandbox for scene inspection and rapid simulation visualization.

Less exciting:

- NIS-Laser-Video-main

- NIS-Starlink-Video-main

- NIS-ICBM-Video-main

Those look mostly like HTML demos/animations, good for presentation ideas but not as strong for backend reuse.

Bottom line: if you want reusable substance, I’d mine:

- synthetic emitter + multibeam collection from sigint_sim_env.py

- CLEAN/tomographic processing from sigint_multibeam_processing.py

- scene/material/elevation generation from sar_scene_data.py

That’s where the real leverage is."